Building an AI-Powered Second Life Bot Through Collaborative Conversation

The Idea

I have been in Second Life for 18 years. In that time I have watched the platform evolve from a curiosity into a global community, and I have tried to build things that make it a little more welcoming along the way. My latest project is All Things In SL — a community hub with a website, forums, land rentals, and a growing set of tools that bridge the virtual world with the web.

One of those tools is an AI-powered bot that lives inside Second Life. You can send it an instant message from anywhere in the virtual world and get an intelligent, context-aware response powered by Anthropic’s Claude API. It remembers your conversations, it can restrict access to group members, and it even accepts Linden Dollar payments for token credits.

I did not build it alone. I built it in collaboration with Claude — not by pasting a single massive prompt and hoping for the best, but through a genuine back-and-forth process that stretched across multiple sessions, dozens of iterations, and more than a few laugh-out-loud debugging moments. This is the story of that process, and why I think it is a better model for building real things with AI than the “one-shot miracle prompt” approach that gets all the attention.

Why “One Prompt” Would Not Have Worked

Let me be upfront: this project could not have been built in a single prompt. Not because the AI is not capable — it absolutely is — but because I did not know everything I needed to know at the start. The architecture touches four different platforms (Second Life, SmartBots, my PHP server, and the Claude API), each with its own quirks, limitations, and documentation gaps. Some of those gaps I did not even know existed until we hit them.

Building something real means dealing with incomplete information, unexpected behavior, and decisions that only make sense once you see the previous step working. That is not a weakness of AI-assisted development — it is just how software gets built. The trick is having a collaborator who can keep up with the twists.

Setting the Stage: Research First, Build Second

The project started weeks before any code was written. I had been exploring SmartBots — a third-party service that hosts bot avatars in Second Life and provides APIs to control them. I uploaded their documentation to a Claude project: HTTP API docs, callback event references, scripting language guides, AI integration examples, the works. I wanted Claude to understand the ecosystem before I asked it to build anything.

This turned out to be one of the best decisions of the entire project. When I eventually said “I want to connect my SmartBots bot to the Claude API so people can have AI conversations inside Second Life,” Claude had already absorbed the documentation and could map out the architecture immediately:

The Three-Tier Architecture

1. Second Life — Avatars send instant messages to AllThingsInSLBot

2. SmartBots — Receives the IM, fires an HTTP callback to my PHP server

3. My PHP Server — Receives the callback, calls the Claude API, sends the reply back via the SmartBots IM API
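The third tier boils down to one small handler: read the sender and text from the callback, ask Claude, and send the answer back. Here is a minimal Python sketch of that flow; the bot itself is PHP. The `ask_claude` and `send_im` callables are hypothetical stand-ins for the Claude API call and the SmartBots IM API. The `speaker_name` and `message` field names are assumptions about the callback payload, so check them against your own logs.

```python
from typing import Callable, Mapping


def handle_im_callback(
    payload: Mapping[str, str],
    ask_claude: Callable[[str, str], str],
    send_im: Callable[[str, str], None],
) -> bool:
    """Turn one SmartBots IM callback into one Claude reply.

    Returns True if a reply was sent, False if the payload was unusable.
    """
    # Assumed field names; SmartBots' real payload may differ.
    sender = (payload.get("speaker_name") or "").strip()
    text = (payload.get("message") or "").strip()
    if not sender or not text:
        return False  # nothing to answer; a real handler should log this

    reply = ask_claude(sender, text)  # PHP server -> Claude API
    send_im(sender, reply)            # PHP server -> SmartBots -> avatar
    return True
```

Passing the two network calls in as parameters lets you test the routing logic without touching either API.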

But Claude also identified what we didn’t have yet. It flagged three specific documentation gaps: the exact POST parameters SmartBots sends in its callback payload, the IM sending syntax, and the inventory-giving API. Instead of guessing, it told me exactly what to look for. I went back, found the docs, and uploaded them. Research first, build second.

Phase 1: Making It Speak

The Build

With the documentation loaded, Claude produced the entire Phase 1 codebase: a config.php for credentials, a setup_callback.php to register with SmartBots, a test_connection.php to verify both APIs, and the main smartbots_claude_callback.php handler. Every file was commented, every decision was explained. It also produced a detailed setup guide and an LSL in-world script as an alternative registration method. The whole package was delivered as a ZIP.
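At its core, a connection test is a single HTTPS POST to Anthropic's Messages API. Below is a minimal Python sketch of that request; the project's own files are PHP. It builds the request without sending it, and `MODEL_ID` stands in for whichever current model name you choose. The endpoint, the `x-api-key` and `anthropic-version` headers, and the response's `content` list of text blocks follow Anthropic's published API. The 500-token default is an arbitrary assumption.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"


def build_claude_request(api_key: str, model: str, system: str,
                         messages: list[dict],
                         max_tokens: int = 500) -> urllib.request.Request:
    """Build (but do not send) a Messages API request."""
    body = {
        "model": model,
        "max_tokens": max_tokens,
        "system": system,          # the bot's persona and house rules
        "messages": messages,      # [{"role": "user", "content": "..."}, ...]
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


def reply_text(response: dict) -> str:
    """Join the text blocks of a Messages API response."""
    return "".join(block.get("text", "")
                   for block in response.get("content", [])
                   if block.get("type") == "text")
```

To actually send it, pass the request to `urllib.request.urlopen(req, timeout=30)`, then give the result of `json.load()` on the response to `reply_text`.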

The code was clean and well-organized. But then came the fun part: making it actually work on a real server, with real services, on a real computer that really did not want to cooperate.

The Silent Failure

I uploaded the files and ran php test_connection.php from the command line. Nothing happened. Not an error, not a success message — just a silent return to the prompt. I ran it four times. Nothing.

Claude walked me through a diagnostic process: syntax-check the config file, check for invisible BOM characters, verify PHP was even running. Each step was one message, one instruction, one result pasted back. We eventually traced it to a formatting issue with the heredoc block in the configuration. PHP is strict about the line that closes a heredoc (before PHP 7.3, the closing identifier had to start in the first column with nothing after it but a semicolon). Stray whitespace there causes a parse error, and when errors are not displayed, a parse error can look exactly like nothing happening. In a one-prompt workflow, this would have been a dead end. In conversation, it was just another step.
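A small pre-flight check, run alongside `php -l`, can catch both suspects from that session. This Python sketch is a hypothetical helper, not part of the project. It flags a UTF-8 byte-order mark at the start of a file and heredoc closing lines that carry extra whitespace. It encodes the strict pre-7.3 rule, so on PHP 7.3 and later, where an indented closer is legal, treat its warnings as hints rather than errors. Read the file with `encoding="utf-8"` (not `utf-8-sig`) so the BOM stays visible.

```python
import re

# A heredoc/nowdoc opener at the end of a line: <<<EOT, <<<"EOT" or <<<'EOT'.
HEREDOC_OPEN = re.compile(r"""<<<[ \t]*(["']?)([A-Za-z_][A-Za-z0-9_]*)\1[ \t]*$""")


def check_php_source(source: str) -> list[str]:
    """Flag a leading UTF-8 BOM and heredoc closers with stray whitespace."""
    problems = []
    if source.startswith("\ufeff"):
        problems.append("file starts with a UTF-8 BOM")
    open_id = None  # identifier of the heredoc we are inside, if any
    for number, line in enumerate(source.splitlines(), start=1):
        if open_id is None:
            match = HEREDOC_OPEN.search(line)
            if match:
                open_id = match.group(2)
            continue
        stripped = line.strip()
        if stripped in (open_id, open_id + ";"):
            if line != stripped:
                problems.append(f"line {number}: whitespace around closing {open_id}")
            open_id = None
    if open_id is not None:
        problems.append(f"heredoc {open_id} is never closed")
    return problems
```

For example, `check_php_source(open("config.php", encoding="utf-8").read())` returns an empty list when neither problem is present.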

The Laravel Roadblock

Next problem: the callback URL returned a 404. But not a server 404 — a Laravel 404. My website runs Laravel, and its .htaccess was routing every single request through Laravel’s front controller, including the /bot/ directory. SmartBots was POSTing to my server, Laravel was intercepting it, and my PHP callback never saw the request.

Claude recognized the Laravel 404 page instantly and provided a one-line fix for the .htaccess, placed above Laravel's own rewrite rules: RewriteRule ^bot/ - [L]. The dash tells Apache to leave the URL unchanged, and [L] stops further rewriting. The bot directory is then served directly instead of going through Laravel. One line, one save, problem solved.

The Computer Crashes

Throughout all of this, my computer kept crashing with NTFS filesystem errors. At one point I mentioned switching computers entirely. Claude’s response was unfazed: “That’s fine — the server-side stuff is all set. The bot will work whether you’re at your keyboard or not.” A small moment, but it captured something I appreciated about the collaboration — it stayed focused on the goal even when the circumstances were messy.

IMing the Wrong Bot

This is my favorite part of the story. After fixing the .htaccess, I opened Second Life and messaged the bot. I got a response from a SmartBots support agent named Justina asking if I needed help. I had accidentally IMed smartbots Resident (their support bot) instead of AllThingsInSLBot Resident (my bot).

“I need my glasses — I must have hit the support bot. I only have a few friends :)”

Claude diagnosed it instantly from the empty callback log. No IMs from my avatar had reached the server because none had been sent to the right bot. I apologized to Justina, found the right avatar, and tried again.

The Moment It Worked

And then, finally:

Metara Rune: Hello. What is the current UTC time?

AllThingsInSLBot: Hello Metara! The current UTC time is approximately 23:43 (11:43 PM). Is there anything about Second Life or the All Things In SL community I can help you with today?

That was Claude talking through my bot, in real time, inside a 20-year-old virtual world. The full pipeline (avatar to SmartBots to PHP to Claude to SmartBots to avatar) was working. It had taken multiple sessions, a documentation hunt, silent PHP failures, a Laravel routing conflict, computer crashes, messaging the wrong bot, and a field-name mismatch to get there. That last one came from the instant_message callback, which sent the sender's name as speaker_name rather than the avatar_name we expected. And every single one of those obstacles was solved through conversation, not through a better prompt.

Phase 2: Teaching It to Remember

Phase 1 proved the pipeline worked, but the bot was stateless — every conversation started from scratch. I wanted it to remember. If someone told the bot their favorite color, I wanted it to recall that three days later.

When I asked for Phase 2, I also mentioned that eventually I would want to track payments and token usage. Claude’s response showed something I came to appreciate about the collaborative approach: it didn’t just build what I asked for right now — it designed the database schema with future phases in mind. The bot_users table included fields for token_balance and total_tokens_used even though we wouldn’t touch billing until Phase 3. A bot_transactions table was created empty, ready and waiting.
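Here is a schema along those lines, sketched with Python's built-in sqlite3 so it runs anywhere; the real database is MySQL. Only the `bot_users` and `bot_transactions` table names and the `token_balance` and `total_tokens_used` columns come from the project. Every other column, and the `bot_messages` history table, is a hypothetical illustration.

```python
import sqlite3

# SQLite stand-in for the MySQL schema; columns beyond token_balance and
# total_tokens_used (and the bot_messages table) are hypothetical.
SCHEMA = """
CREATE TABLE bot_users (
    avatar_key        TEXT PRIMARY KEY,            -- e.g. the avatar's UUID
    avatar_name       TEXT NOT NULL,
    token_balance     INTEGER NOT NULL DEFAULT 0,  -- unused until Phase 3
    total_tokens_used INTEGER NOT NULL DEFAULT 0   -- unused until Phase 3
);
CREATE TABLE bot_messages (                        -- Phase 2 conversation memory
    id         INTEGER PRIMARY KEY AUTOINCREMENT,
    avatar_key TEXT NOT NULL REFERENCES bot_users(avatar_key),
    role       TEXT NOT NULL CHECK (role IN ('user', 'assistant')),
    content    TEXT NOT NULL
);
CREATE TABLE bot_transactions (                    -- created empty in Phase 2
    id            INTEGER PRIMARY KEY AUTOINCREMENT,
    avatar_key    TEXT NOT NULL REFERENCES bot_users(avatar_key),
    linden_amount INTEGER NOT NULL,
    tokens_added  INTEGER NOT NULL
);
"""


def create_schema(conn: sqlite3.Connection) -> sqlite3.Connection:
    """Create all three tables in one go and return the connection."""
    conn.executescript(SCHEMA)
    return conn
```

The point is the two billing columns that default to zero. Phase 2 never reads them, but Phase 3 can start using them without an ALTER TABLE.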

This kind of forward thinking is where the iterative conversation model really shines. Because Claude had been part of the planning discussions, it knew what was coming and could build foundations that would not need to be torn up later. A one-shot prompt would have produced a schema for Phase 2 only.

The upgrade itself was smooth — create a MySQL database in cPanel, import the schema, update the config, upload the new files. I tested it by telling the bot my favorite color and then asking what it was in a follow-up message. It remembered. Phase 2 was live.
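Remembering comes down to replaying stored turns with each new message. Here is a minimal Python sketch. It assumes history is stored as a list of `{"role", "content"}` dicts in the shape the Messages API accepts. The 20-message window is an arbitrary assumption to keep token usage bounded. Because the API expects the conversation to open with a user turn, the sketch drops any assistant turn left at the front after trimming.

```python
def build_messages(history: list[dict], new_text: str,
                   max_messages: int = 20) -> list[dict]:
    """Recent history plus the new user turn, ready for the Messages API."""
    messages = history + [{"role": "user", "content": new_text}]
    messages = messages[-max(1, max_messages):]  # new list; history is untouched
    # Trimming can leave an assistant turn first; the API wants a user turn.
    while messages and messages[0]["role"] != "user":
        messages.pop(0)
    return messages
```

In the PHP version, `history` would be the rows loaded for this avatar, and both the new message and Claude's reply would be written back after each exchange.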

Phase 3: Access Control and Money

The final phase added three major features: tiered access control (everyone, group members only, or owner only), a blacklist system, and a Linden Dollar payment system for token credits.
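The access rules can be expressed as one decision function. Here is a minimal Python sketch under stated assumptions. Avatars are identified by name, though a real bot would compare UUID keys. `group_members` is a hypothetical set the bot has already fetched. The order of checks (owner first, then blacklist, then mode) is a design choice, not something the project specifies.

```python
from enum import Enum


class AccessMode(Enum):
    EVERYONE = "everyone"
    GROUP = "group"
    OWNER = "owner"


def is_allowed(avatar: str, mode: AccessMode, owner: str,
               group_members: set[str], blacklist: set[str]) -> bool:
    """Decide whether the bot should answer this avatar."""
    if avatar == owner:
        return True              # the owner can never lock themselves out
    if avatar in blacklist:
        return False             # a block overrides every mode
    if mode is AccessMode.EVERYONE:
        return True
    if mode is AccessMode.GROUP:
        return avatar in group_members
    return False                 # OWNER mode: nobody but the owner
```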

I told Claude I wanted to toggle between access modes via IM commands, and that pricing would be based on L$200 per US dollar. Claude built a complete admin command system (!mode group, !stats, !billing on, !block, etc.) and a user command system (balance, buy, help) — all controlled via instant message from inside Second Life.
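Here is a minimal Python sketch of how those IM commands might be told apart from ordinary chat. The command names come from the project, but the parsing rules are assumptions. Admin commands start with `!`. User commands count only when the whole message is the bare word, so "help me build a house" still goes to Claude. A non-admin who tries an admin command gets a `denied` result instead of having it forwarded.

```python
from typing import Optional

ADMIN_COMMANDS = {"!mode", "!stats", "!billing", "!block"}
USER_COMMANDS = {"balance", "buy", "help"}


def parse_command(text: str, is_admin: bool) -> Optional[tuple[str, list[str]]]:
    """Return (command, args) for a command, or None for ordinary chat."""
    parts = text.strip().split()
    if not parts:
        return None
    command, args = parts[0].lower(), parts[1:]
    if command in ADMIN_COMMANDS:
        # Refuse rather than forward admin commands from anyone else.
        return (command, args) if is_admin else ("denied", [])
    if command in USER_COMMANDS and not args:
        return (command, [])
    return None
```

For instance, `parse_command("!mode group", is_admin=True)` yields `("!mode", ["group"])`. The caller then switches the access mode.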

For the payment system, Claude anticipated the same documentation issue we had hit in Phase 1. The balance_changed callback from SmartBots might use different field names than documented, just like instant_message had used speaker_name instead of avatar_name. So the Phase 3 code was built to check multiple possible field names and included debug logging for the first payment received. We had learned from our earlier discovery and baked that lesson directly into the next iteration.
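Here is a minimal Python sketch of that defensive style; the real handler is PHP. `first_field` returns the first non-empty value among several candidate names. The raw payload is recorded for debugging, and L$ are converted at the project's rate of L$200 per US dollar. The payment field names and the `TOKENS_PER_USD` rate are hypothetical placeholders, since neither is stated here. `Decimal` avoids floating-point rounding in the conversion.

```python
from decimal import Decimal, ROUND_DOWN
from typing import Mapping, Optional

LINDEN_PER_USD = Decimal(200)       # the project's pricing basis
TOKENS_PER_USD = Decimal(100_000)   # hypothetical rate; not stated in the article


def first_field(payload: Mapping[str, str], *names: str) -> Optional[str]:
    """Return the first non-empty value among several candidate field names."""
    for name in names:
        value = (payload.get(name) or "").strip()
        if value:
            return value
    return None


def tokens_for_payment(linden_amount: int) -> int:
    """Convert L$ to token credits, rounding down to whole tokens."""
    usd = Decimal(linden_amount) / LINDEN_PER_USD
    return int((usd * TOKENS_PER_USD).to_integral_value(rounding=ROUND_DOWN))


def handle_payment(payload: Mapping[str, str],
                   debug_log: list) -> Optional[tuple[str, int]]:
    """Return (payer, tokens) for an incoming payment, or None."""
    debug_log.append(dict(payload))  # keep the raw payload to learn real field names
    # Hypothetical candidate names; replace them with what the log shows.
    payer = first_field(payload, "avatar_name", "payer_name", "name")
    amount = first_field(payload, "amount", "change")
    if payer is None or amount is None:
        return None
    try:
        linden = int(amount)
    except ValueError:
        return None
    if linden <= 0:
        return None  # money leaving the bot earns no credit
    return payer, tokens_for_payment(linden)
```

The same helper covers the Phase 1 lesson: `first_field(payload, "avatar_name", "speaker_name")` works whichever of the two names the instant_message callback actually sends.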

That is the real value of building collaboratively: the AI does not just remember what you built — it remembers what went wrong and why, and it carries those lessons forward.

What I Learned About Working with AI

1. Research Before You Build

The time I spent uploading SmartBots documentation before writing any code was the highest-return investment of the entire project. Claude could only be as good as the context I gave it. When I uploaded incomplete docs, it told me exactly what was missing. When I filled the gaps, it built with confidence.

2. Expect the Unexpected

Three of our biggest roadblocks (the silent PHP heredoc failure, the Laravel routing conflict, and the undocumented callback field names) were things neither of us could have predicted from documentation alone. They only surfaced during real-world testing. The collaborative model handled this naturally: I reported what happened, Claude diagnosed why, and we fixed it together.

3. Test Each Step Before Moving On

Claude built test scripts into every phase. Connection tests, curl tests, logging statements. When something broke, we always had a diagnostic path. This is not glamorous work, but it is the difference between a project that ships and one that stalls.
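Logging is the cheapest of those diagnostics. Here is a minimal Python sketch of an append-only JSON-lines log; the project's own logging is PHP, and the file format is an assumption. It writes one timestamped line per callback. An empty log then clearly means nothing arrived, which is exactly how the wrong-bot mix-up showed itself.

```python
import json
from datetime import datetime, timezone
from typing import Iterable, Optional


def format_log_line(event: str, payload: dict,
                    now: Optional[datetime] = None) -> str:
    """One JSON object per line, timestamped in UTC."""
    stamp = (now or datetime.now(timezone.utc)).isoformat()
    return json.dumps({"ts": stamp, "event": event, "payload": payload}) + "\n"


def append_log(path: str, event: str, payload: dict) -> None:
    """Append a record; call this first thing in every callback handler."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(format_log_line(event, payload))


def parse_log(lines: Iterable[str]) -> list[dict]:
    """Read records back, skipping blank lines."""
    return [json.loads(line) for line in lines if line.strip()]
```

Because every record is self-contained, `parse_log(open(path, encoding="utf-8"))` reads the file back even if a write was cut off mid-way and later lines were appended.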

4. Forward-Compatible Design Matters

Because Claude was part of the conversations about future plans, it could build Phase 2’s database with Phase 3’s billing columns already in place. No migrations, no restructuring. This kind of design requires context that only exists in an ongoing conversation.

5. The AI Catches What You Miss (and Vice Versa)

Claude caught things I would have missed — like the need for an .htaccess exception for the bot directory, or the importance of building fallback behavior when the database is unavailable. And I caught things Claude could not have known — like the fact that I was messaging the wrong bot, or that the SmartBots documentation did not match reality. Together, we covered ground that neither of us could have covered alone.
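That fallback can be as simple as catching the database error and answering without memory. Here is a minimal Python sketch. It uses `sqlite3.Error` as the stand-in exception; with MySQL it would be your driver's error class. `load` and `warn` are hypothetical hooks for the real query and logger.

```python
import sqlite3
from typing import Callable


def load_history_or_empty(load: Callable[[], list],
                          warn: Callable[[str], None]) -> list:
    """Return stored history, or [] (a stateless reply) if the database fails."""
    try:
        return load()
    except sqlite3.Error as exc:  # with MySQL, catch your driver's error class
        warn(f"history unavailable, answering without memory: {exc}")
        return []
```

The bot loses its memory for that one exchange but still replies, which matters more to someone waiting on an IM than a perfect recall of last week.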

6. The Process Is the Product

The most useful thing about this project is not the final codebase. It is the documented record of how we got there — every obstacle, every decision, every workaround. Anyone building a similar integration can follow this path and avoid the pitfalls we already found. That record only exists because we built iteratively, in conversation, one prompt at a time.

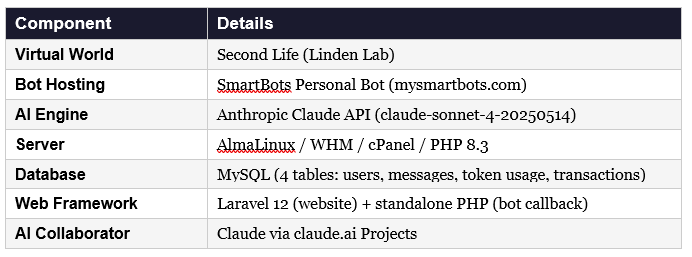

The Technical Stack

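For anyone interested in building something similar, here is what powered this project:

1. Second Life: the virtual world where avatars send instant messages to AllThingsInSLBot

2. SmartBots: hosts the bot avatar, fires HTTP callbacks such as instant_message and balance_changed, and sends replies through its HTTP API

3. PHP on a cPanel-hosted Apache server: config.php, setup_callback.php, test_connection.php, and the smartbots_claude_callback.php handler, living alongside a Laravel site

4. MySQL: conversation memory plus the bot_users and bot_transactions tables

5. Anthropic's Claude API: generates every reply

6. LSL: an optional in-world script for registering the callback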

One Prompt at a Time

There is a temptation in the AI era to treat language models like magic boxes. You write the perfect prompt, you get the perfect output, you move on. And for some tasks — generating a cover letter, summarizing a document, writing a regex — that works fine.

But for building real systems that touch real infrastructure and serve real people, the one-prompt approach breaks down. Real projects have incomplete documentation, silent failures, platform quirks, and design decisions that depend on context from three conversations ago. Real projects need a collaborator, not a calculator.

What I found, building this bot, is that the back-and-forth is the methodology. The research phase where Claude identified missing docs. The debugging sessions where we traced empty variables to undocumented field names. The forward-thinking database design that saved us a migration. The humor of messaging the wrong bot and apologizing to a bemused support agent. All of it contributed to a system that works, that I understand, and that I can extend.

If you are thinking about building something with AI — especially something that bridges multiple platforms or deals with third-party APIs — my advice is simple: do not try to get it all in one prompt. Upload your documentation. Ask questions. Build in phases. Test everything. Read the logs. And when your bot finally talks back to you from inside a 20-year-old virtual world, you will have earned that moment.

— — —

Drew is the founder of All Things In SL, a Second Life community hub.

allthingsinsl.com